
Microsoft Fabric

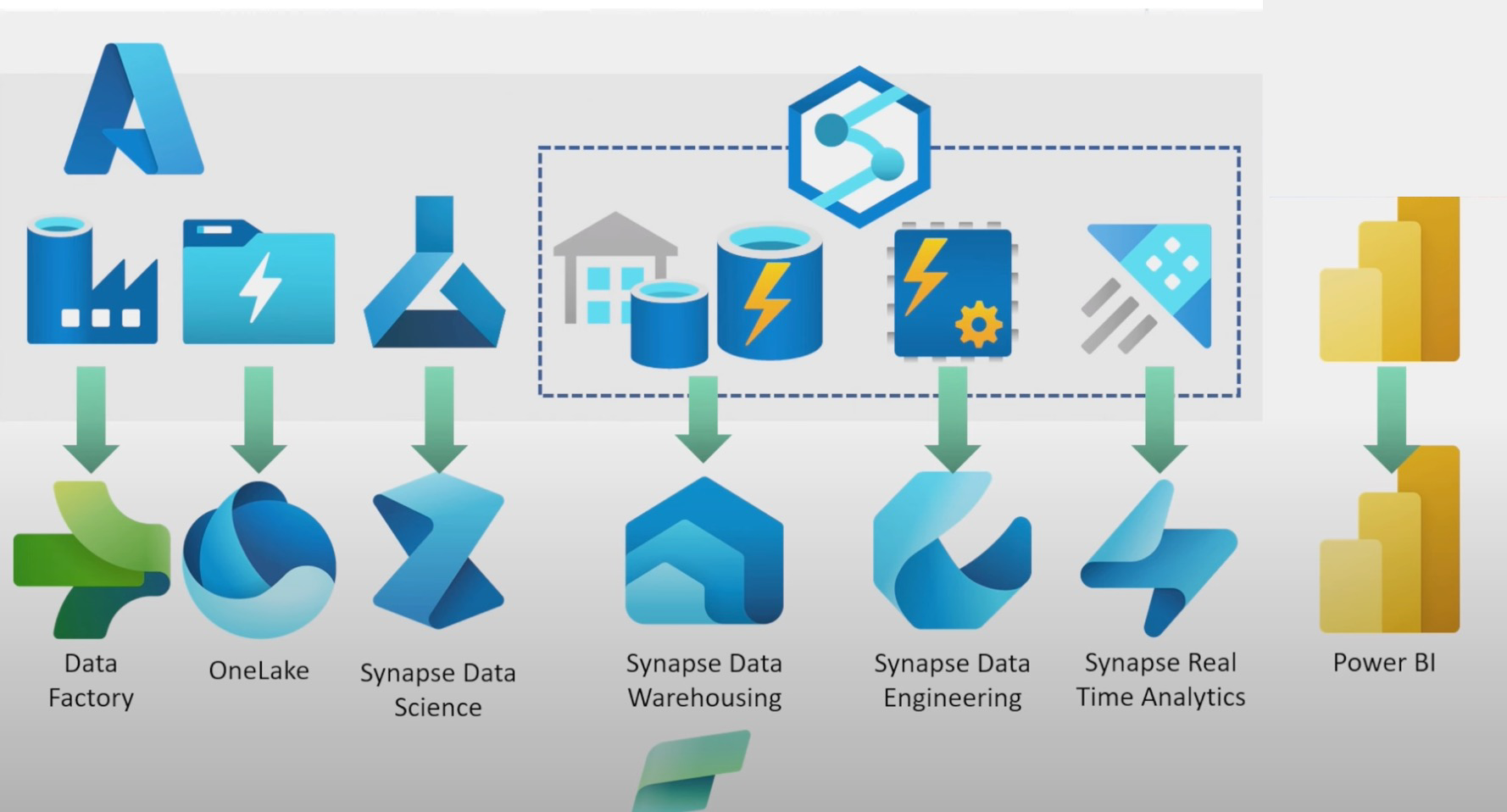

Announced on 2023-05-23 as Microsoft’s single, unified place for analytics. It’s like a Databricks replica, except that it bundles the tools from Azure without you needing to go into Azure (see more below). See the announcement Introducing Microsoft Fabric and Copilot in Microsoft Power BI.

They also announced Git integration for some Power BI artifacts (RW Introduction to git integration). But it’s not there yet, as most item types are excluded. It will probably take a long time until it’s ready, as MS products usually store lots of visual layout inside the files as well (same as in the SSAS builder, where everything is XML with lots of IDs).

# Features

Can cross-cloud data analytics be easy? | Microsoft Fabric - YouTube

These features are possible thanks to an open data lake table format like Delta Lake, which they packaged into MS OneLake, with more security and integration (lineage, etc.) on top of it, similar to what Databricks does with notebooks and the Lakehouse.
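To make the open-table-format point concrete: a Delta table is a folder of Parquet files plus a `_delta_log/` folder of numbered JSON commits, each holding one action per line (`add`, `remove`, `metaData`, `protocol`, `commitInfo`). Any engine that replays that log can tell which Parquet files make up the current table version, which is what lets several compute engines share one copy of the data. Below is a heavily simplified sketch of that replay using only the standard library; `replay_delta_log` is a hypothetical helper, not part of any Fabric or Delta API, and it ignores checkpoints, deletion vectors, and protocol checks that a real reader must handle.

```python
import json

def replay_delta_log(commits: list[str]) -> set[str]:
    """Hypothetical helper: return the data files that are live after
    replaying Delta commits in version order (commits[0] is version 0).

    Simplified sketch: ignores checkpoints, deletion vectors and protocol checks.
    """
    live: set[str] = set()
    for commit in commits:
        # Each commit file is newline-delimited JSON, one action per line.
        for line in commit.splitlines():
            if not line.strip():
                continue
            action = json.loads(line)
            if "add" in action:
                live.add(action["add"]["path"])
            elif "remove" in action:
                live.discard(action["remove"]["path"])
            # metaData, protocol and commitInfo do not change the file set.
    return live

# Two made-up commits: version 0 writes two files, version 1 rewrites one of them.
v0 = "\n".join([
    json.dumps({"protocol": {"minReaderVersion": 1, "minWriterVersion": 2}}),
    json.dumps({"add": {"path": "part-0000.parquet", "dataChange": True}}),
    json.dumps({"add": {"path": "part-0001.parquet", "dataChange": True}}),
])
v1 = "\n".join([
    json.dumps({"remove": {"path": "part-0000.parquet", "dataChange": True}}),
    json.dumps({"add": {"path": "part-0002.parquet", "dataChange": True}}),
])

print(sorted(replay_delta_log([v0, v1])))  # ['part-0001.parquet', 'part-0002.parquet']
```

Replaying only version 0 (`replay_delta_log([v0])`) returns the original two files, which is the basic idea behind reading an older table version.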

You can combine different query languages and compute engines. I know they use Spark under the hood to tie things together, but for T-SQL they might use a serverless SQL server or Analysis Services, depending on what you need.

# Comments on Simon Whiteley’s Video

See more from Simon Whiteley on Advancing Fabric - What is Microsoft Fabric? - YouTube.

Some comments and notes:

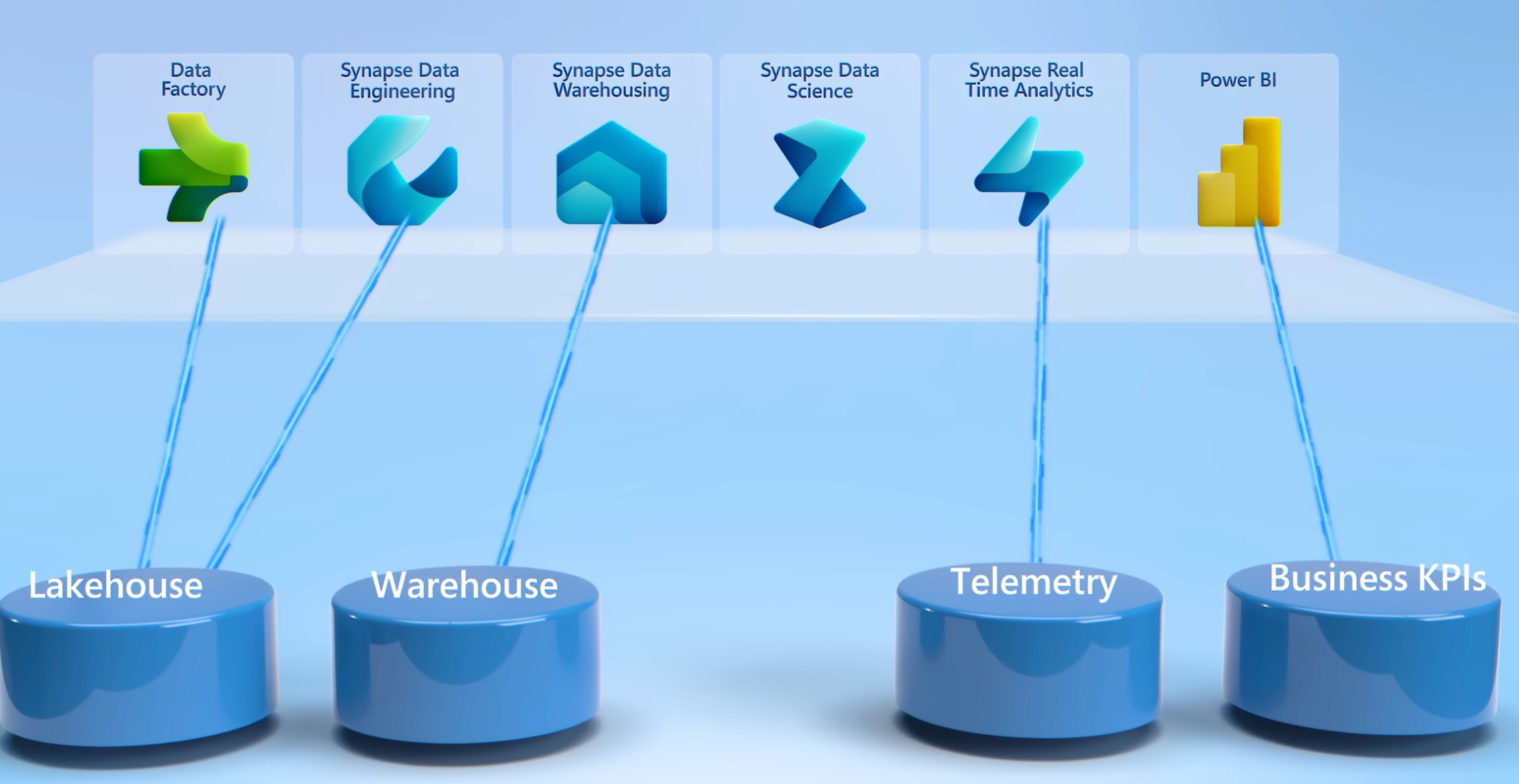

- It’s a SaaS service on top of Synapse, Power BI, and more, integrated under a new name: Fabric.

- What is different is the communication: they talk about Fabric the same way they talk about MS Excel.

- Spark in MS Synapse is now called Synapse Data Engineering

- His comment: it all looks nice, but will it be seamlessly integrated?

- In Synapse, everything was stored in different formats: a little SQL, a little Delta, etc. The fantastic news is that in Fabric, everything is Delta Lake!

- Even if you write T-SQL and store the table, it will be a Delta table.

- There is no Azure; there is only SQL, Delta, and Spark. When you log into Fabric, you log into Power BI. It’s software as a service, not Azure. Wow!

# Lakehouse reference

Microsoft Fabric is basically Microsoft’s Lakehouse implementation. Others have their own: Databricks with Delta Lake, and Onehouse with Apache Hudi (Onehouse also started XTable, formerly OneTable, which translates table metadata between Hudi, Delta, and Iceberg). Dremio is also building one on Iceberg, and Snowflake is becoming more open with Apache Iceberg.

## Comparison to Databricks (User Experience)

As of 2025-02-04, this user, coming from Databricks, was quite frustrated with the forced move to Fabric: Considering resigning because of Fabric : r/dataengineering.

# Declarative Metrics or Business Logic

I wonder where the business logic is stored within Power BI. Or is there a semantic layer? –> Tweet

Simon Whiteley:

Yeah, that’ll be your Power BI models most likely. You could have logical layers in either Lakehouse or Warehouse artifacts, but your metrics will be Power BI measures directly on top of the Delta Table objects.

Thomas Gremm on LinkedIn:

Mostly in the transformations of the ETL/ELT jobs/dataflows/pipelines between the Delta tables, similar to the Medallion Architecture known from the Databricks side.

The business logic is spread across several products (Factory, Data Engineering, Synapse DWH, etc.) in several languages (Spark, T-SQL, KQL). You can also put business logic into Power BI data models (like DAX measures, calculation groups, etc.), but then it should be handled like a data mart.

The truth should be persisted in the Delta tables (with Parquet as the underlying file format), which is analogous to the Gold layer of the Medallion Architecture in Databricks’ terminology (= the well-structured business presentation layer).

Maybe the Tabular Model Definition Language (TMDL) is a good option, as it’s human-readable.
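The discussion above boils down to defining each metric once and reusing it, instead of re-implementing it in every pipeline or report. A minimal sketch of that declarative idea in plain Python, assuming gold-layer rows are already loaded as dictionaries; `Metric`, `evaluate`, and the sample data are hypothetical and are not a Fabric, Power BI, or TMDL API. In Power BI, the same definition would typically live as a DAX measure in the semantic model.

```python
from dataclasses import dataclass
from typing import Callable, Optional

Row = dict[str, object]

@dataclass(frozen=True)
class Metric:
    """Hypothetical declarative metric: what to aggregate, how, and over which rows."""
    name: str
    column: str
    agg: str  # "sum", "count" or "avg"
    where: Optional[Callable[[Row], bool]] = None

def evaluate(metric: Metric, rows: list[Row]) -> float:
    """Evaluate one metric definition against gold-layer rows."""
    selected = [r for r in rows if metric.where is None or metric.where(r)]
    values = [float(r[metric.column]) for r in selected]
    if metric.agg == "sum":
        return sum(values)
    if metric.agg == "count":
        return float(len(values))
    if metric.agg == "avg":
        return sum(values) / len(values) if values else 0.0
    raise ValueError(f"unknown aggregation: {metric.agg}")

# Made-up gold-layer sample rows, for illustration only.
gold_sales = [
    {"region": "EU", "amount": 100.0},
    {"region": "EU", "amount": 50.0},
    {"region": "US", "amount": 200.0},
]

# All metric definitions in one place, as data.
METRICS = [
    Metric("total_sales", "amount", "sum"),
    Metric("eu_sales", "amount", "sum", where=lambda r: r["region"] == "EU"),
    Metric("avg_order", "amount", "avg"),
]

results = {m.name: evaluate(m, gold_sales) for m in METRICS}
print(results)
```

Keeping the definitions as data rather than scattering them across notebooks makes them easier to review and diff, which is also the appeal of a human-readable format like TMDL.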

# Engine

The Data Lakehouse part of Fabric is basically just Spark or Python on top of Delta. The Data Warehouse part has its own proprietary engine, which is quite interesting as it allows T-SQL and multi-table transactions on top of Delta (Patrick Pichler on LinkedIn).

# Release

- 2024-11-26: With the recent release of pure Python Notebooks in Microsoft Fabric, the excitement about these lightweight native engines has risen to a new high. Source

# Why not

From Is it just me, or is Microsoft Fabric overhyped? : r/dataengineering as of 2025-03-11:

- No Local Development
  - There’s no way to run a local Fabric instance and connect it to an IDE.
  - Being forced to use the web UI for navigation is inefficient and unfriendly.

- Poor Terraform Support
  - After 10 years of development, we’re still at step zero?
  - Terraform, which is standard for infrastructure as code in data engineering, has almost no meaningful support in Fabric.

- Git Integration is Useless
  - While Git integration exists, what’s the point if I can’t develop locally?
  - Even worse, Azure Data Factory isn’t supported, which is a crucial tool for me.

- No Proper Function Support
  - Am I really expected to run production pipelines in notebooks?
  - This seems like a recipe for disaster. How am I supposed to test, modularize, and run proper code reviews?
  - Notebooks are fine for testing, but they were never designed for running production ETL/ELT.
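One common mitigation for the testing and modularization complaint is to keep transformation logic in plain functions that can be unit-tested outside the notebook, and let the notebook only call them. A minimal sketch, assuming rows arrive as dictionaries; `clean_orders` and its fields are hypothetical, and in a real Fabric notebook the same function would more likely take and return a Spark or pandas DataFrame.

```python
import unittest

def clean_orders(rows: list[dict]) -> list[dict]:
    """Hypothetical silver-layer step: drop rows without an order id,
    trim customer names and cast amounts to float."""
    cleaned = []
    for row in rows:
        if not row.get("order_id"):
            continue  # reject incomplete records
        cleaned.append({
            "order_id": row["order_id"],
            "customer": str(row.get("customer", "")).strip(),
            "amount": float(row.get("amount", 0)),
        })
    return cleaned

class CleanOrdersTest(unittest.TestCase):
    def test_drops_rows_without_id(self):
        rows = [{"order_id": None, "amount": "5"}, {"order_id": "A1", "amount": "5"}]
        self.assertEqual([r["order_id"] for r in clean_orders(rows)], ["A1"])

    def test_normalises_fields(self):
        rows = [{"order_id": "A2", "customer": "  Ada ", "amount": "12.5"}]
        self.assertEqual(clean_orders(rows),
                         [{"order_id": "A2", "customer": "Ada", "amount": 12.5}])

# argv/exit arguments let this run inside a notebook cell as well as a script.
unittest.main(argv=["ignored"], exit=False)
```

The same module can be run locally or in CI with `python -m unittest`, so code review and tests don't depend on the web UI.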

# History

- Evolutionary History of Microsoft Fabric - Spreadsheets to Lakehouse - YouTube

- Open question: how much is VertiPaq still used?

Origin:

References:

Power BI